A layered training corpus (domain pairs, public ballast, and a reusable Postgres syntax corpus) is 80% of the work for an NL2SQL LoRA. The rest is a YAML training config and patience.

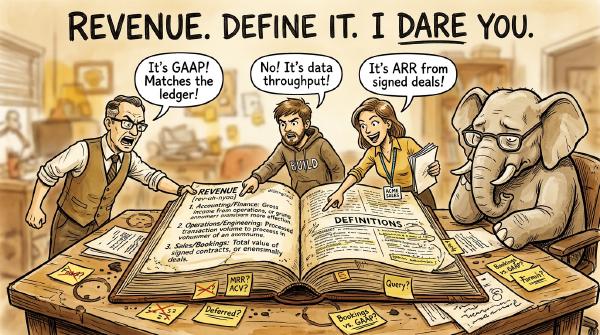

A step-by-step walkthrough of building a PostgreSQL semantic layer in pg_agents: crawl the schema, enrich it, define your vocabulary, lock down categoricals, and promote the queries that work.

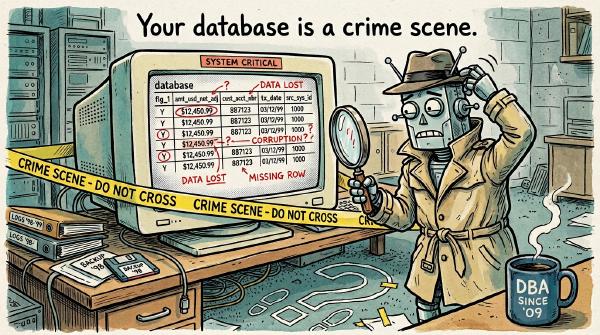

Raw LLMs reach only 10-20% accuracy on real enterprise schemas with cryptic column names and tribal-knowledge joins. Here’s why, and the semantic-layer fix that takes you from toy to production.